-

- 慕九州1469150 2021-11-17

2

- 0赞 · 0采集

-

- 慕九州1469150 2021-11-17

1

- 0赞 · 0采集

-

- 霜花似雪 2019-09-14

from urllib.request import urlopen from bs4 import BeautifulSoup as bs import pymysql.cursors # 打开链接并读取,把结果用utf-8编码 resp = urlopen("http://www.umei.cc/bizhitupian/meinvbizhi/").read().decode("utf-8") # 使用html.parser解析器 soup = bs(resp,"html.parser") # 格式化输出 #print(soup.prettify()) #print(soup.img) # 获取img标签 #print(soup.find_all('img')) # 获取所有img标签信息 for link in soup.find_all('img'): # 从文档中找到所有img标签的链接 #print(link.get('src')) #print(link.get('title')) # 获取数据库连接 connection = pymysql.connect(host="localhost", user="root", password="root", db="python_mysql", charset="utf8mb4") try: #获取会话指针 with connection.cursor() as cursor: #创建sql语句 sql = "insert into `girl_image`(`title`, `urlhref`) values (%s, %s)" # 执行sql语句 cursor.execute(sql, (str(link.get('title')), link.get('src'))) #提交 connection.commit() finally: connection.close()- 0赞 · 0采集

-

- 霜花似雪 2019-09-14

Python操作mysql步骤3

-

截图0赞 · 0采集

-

- 霜花似雪 2019-09-14

Python操作mysql使用步骤2

-

截图0赞 · 0采集

-

- 霜花似雪 2019-09-14

Python操作mysql使用步骤

-

截图0赞 · 0采集

-

- 霜花似雪 2019-09-14

存储数据到MySQL

-

截图0赞 · 0采集

-

- 东大街的仔 2018-07-29

查询数据mysql

- 0赞 · 0采集

-

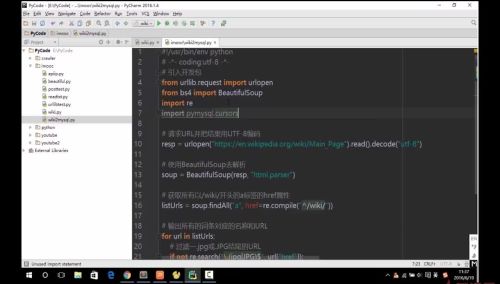

- iphp 2018-04-11

#!/usr/bin/env python # encoding: utf-8 #引入开发包 from urllib.request import urlopen from bs4 import BeautifulSoup import re import pymysql resp = urlopen("https://en.wikipedia.org/wiki/Main_Page").read().decode("utf-8") soup = BeautifulSoup(resp, "html.parser") listUrls = soup.find_all("a", href=re.compile("^/wiki/")) #print(listUrls) connection = pymysql.connect(host='localhost', user='root', password='', db='wiki', charset='utf8') print(connection) try: with connection.cursor() as cursor: for url in listUrls: if not re.search("\.(jpg|jpeg)$", url['href']): sql = "insert into `urls`(`urlname`,`urlhref`)values(%s, %s)" #print(sql) #print(url.get_text()) cursor.execute(sql, (url.get_text(), "https://en.wikipedia.org" + url["href"])) connection.commit() finally: connection.close();- 0赞 · 0采集

-

- 慕九州633462 2018-01-26

- 使用pymysql

-

截图0赞 · 0采集

-

- 慕九州633462 2018-01-26

- pymysql安装

-

截图0赞 · 0采集

-

- Gigure 2017-11-06

- 存储数据到MySQL

-

截图0赞 · 0采集

-

- Gigure 2017-11-06

- 存储数据到MySQL

-

截图0赞 · 0采集

-

- Gigure 2017-11-06

- 存储数据到MySQL

-

截图0赞 · 0采集

-

- Gigure 2017-11-06

- 存储数据到MySQL

-

截图0赞 · 0采集

-

- 聞道 2017-08-04

- 存储数据到MySQL

-

截图0赞 · 0采集

-

- yiran3344 2017-07-13

- 导入数据库代码

-

截图0赞 · 0采集

-

- 慕前端7124754 2017-07-08

- try: with connection.cursor() as cursor: sql = "insert into test(name,url) values (%s,%s)" cursor.execute(sql,(url.get_text(),'https://en.wikipedia.org' + url['href'])) connection.commit() finally: connection.close()

- 0赞 · 0采集

-

- 慕仙9191842 2017-06-03

- 传入mysql语句

-

截图0赞 · 0采集

-

- 慕仙9191842 2017-05-23

- MySQL参数

-

截图0赞 · 0采集

-

- jaymo 2017-05-07

- pymysql相当于一个连接MySQL的工具包,pymysql是帮助我们连接MySQL的,他们当然不会冲突了。

- 0赞 · 0采集

-

- 14数学院姚晓文 2017-04-24

- 关闭连接对象,以节省资源

-

截图0赞 · 0采集

-

- 14数学院姚晓文 2017-04-24

- 获取cursor对象,并使用cursor执行sql语句

-

截图0赞 · 0采集

-

- 14数学院姚晓文 2017-04-24

- 获取CONNECTION对象

-

截图0赞 · 0采集

-

- moocer9527 2016-12-16

- 安装pymysql

-

截图0赞 · 0采集

-

- 林夕31 2016-12-08

- 不错

-

截图0赞 · 1采集

-

- 11点半 2016-11-19

- pip install pymysql

- 0赞 · 0采集

-

- 优秀0 2016-11-06

- 网络带宽非常昂贵

- 0赞 · 0采集

-

- 坚守鲍家街 2016-10-25

- import urllib2 import re from bs4 import BeautifulSoup import pymysql resp = urllib2.urlopen("http://baike.so.com/doc/1790119-1892991.html").read().decode("utf-8") soup = BeautifulSoup(resp, "html.parser") listUrls = soup.findAll("a", href = re.compile("^/doc/")) for url in listUrls: print url.get_text(), "http://baike.so.com"+url["href"] connection = pymysql.connect(host='localhost', user='root', password='', db='360mysql', charset='utf8') try: with connection.cursor() as cursor: for url in listUrls: sql = "insert into `urls`(`name`,`url`)values(%s,%s)" cursor.execute(sql,(url.get_text(),"http://baike.so.com"+url["href"])) connection.commit(); finally: connection.close();

- 4赞 · 5采集

-

- 顾小北 2016-08-27

- 像mysql中插入数据

-

截图0赞 · 0采集

数据加载中...